Google Chrome silently installs a 4 GB AI model on your device without consent

By theprivacyguy.com, Alexander Hanff.

Two weeks ago I wrote about Anthropic silently registering a Native Messaging bridge in seven Chromium-based browsers on every machine where Claude Desktop was installed [1]. The pattern was: install on user launch of product A, write configuration into the user's installs of products B, C, D, E, F, G, H without asking. Reach across vendor trust boundaries. No consent dialog. No opt-out UI. Re-installs itself if the user removes it manually, every time Claude Desktop is launched.

This week I discovered the same pattern, executed by Google. Google Chrome is reaching into users' machines and writing a 4 GB on-device AI model file to disk without asking. The file is named weights.bin. It lives in OptGuideOnDeviceModel. It is the weights for Gemini Nano, Google's on-device LLM. Chrome did not ask. Chrome does not surface it. If the user deletes it, Chrome re-downloads it.

This is, in my professional opinion, a direct breach of Article 5(3) of Directive 2002/58/EC (the ePrivacy Directive) [2], a breach of the Article 5(1) GDPR principles of lawfulness, fairness, and transparency [3], a breach of Article 25 GDPR's data-protection-by-design obligation [3], and an environmental harm of a magnitude that would be a notifiable event under the Corporate Sustainability Reporting Directive (CSRD) for any in-scope undertaking [4].

What is on the disk and how it got there

On any machine that has Chrome installed, in the user profile, sits a directory whose name is OptGuideOnDeviceModel. Inside it is a file called weights.bin. The file is approximately 4 GB. It is the weights file for Gemini Nano. Chrome uses it to power features Google has marketed under names like "Help me write", on-device scam detection, and other AI-assisted browser functions.

The file appeared with no consent prompt. There is no checkbox in Chrome Settings labelled "download a 4 GB AI model". The download triggers when Chrome's AI features are active, and those features are active by default in recent Chrome versions. On any machine that meets the hardware requirements, Chrome treats the user's hardware as a delivery target and writes the model.

The cycle of deletion and re-download has been documented across multiple independent reports on Windows installations [5][6][7][8] - the user deletes, Chrome re-downloads, the user deletes again, Chrome re-downloads again. The only ways to make the deletion stick are to disable Chrome's AI features through chrome://flags or enterprise policy tooling that home users do not generally have, or to uninstall Chrome entirely [5]. On macOS the file lands as mode 600 owned by the user (so it is deletable in principle) but Chrome holds the install state in Local State after the bytes are written, and as soon as the variations server next tells Chrome the profile is eligible, the download fires again - the architecture is the same, only the file permissions differ.

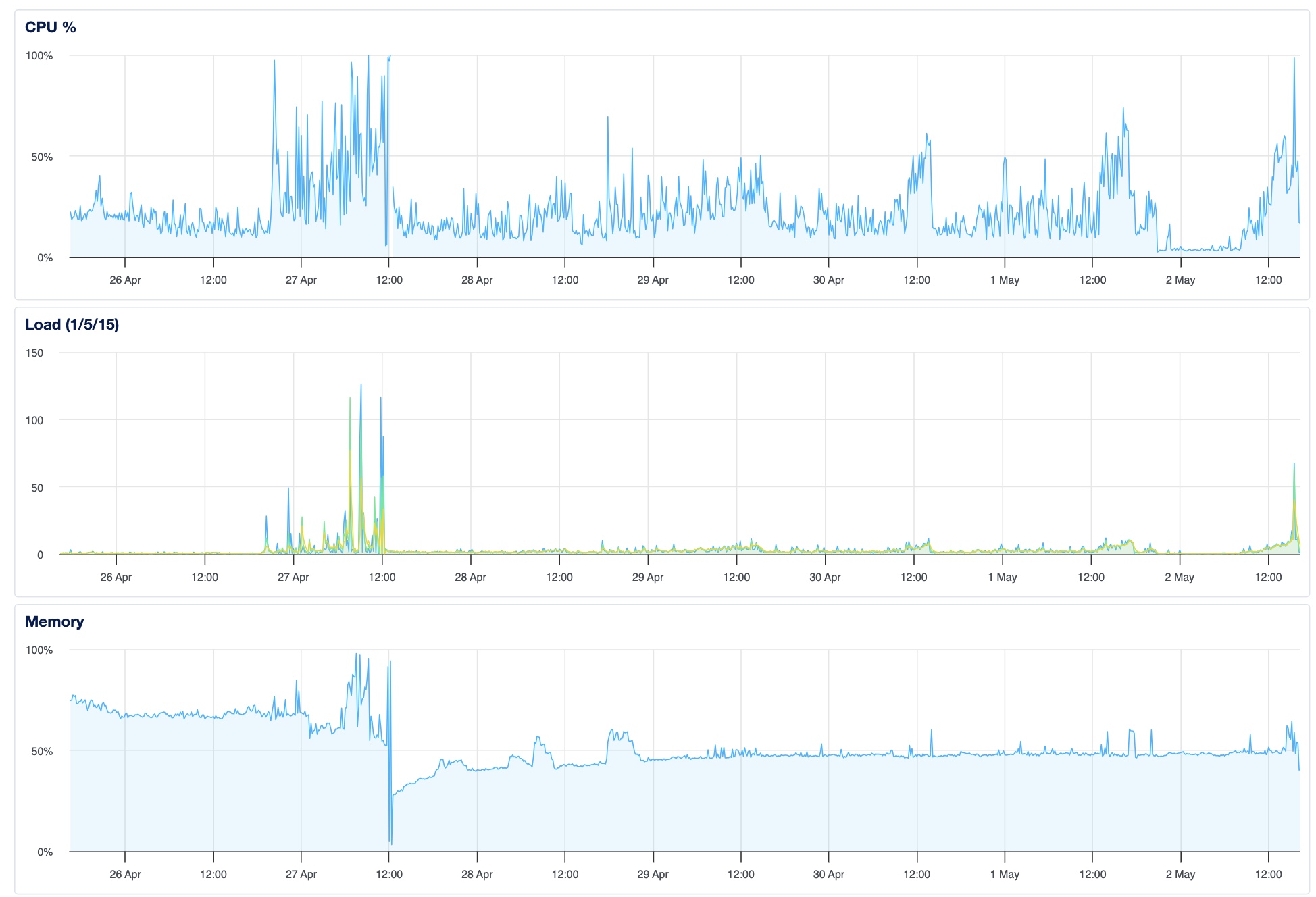

How I verified this on a freshly created Apple Silicon profile

Most of the existing reporting on this behaviour is from Windows users who noticed their disk filling up - useful, but Google could (and probably will) try to characterise those reports as anecdotes from non-representative configurations. So I went looking for a clean witness on a different platform.

The witness I found is macOS itself. The kernel keeps a filesystem event log called .fseventsd - it records every file create, modify and delete at the OS level, independent of any application logging. Chrome cannot edit it, Google cannot remotely reach it, and the page files that record the events survive the deletion of the files they reference.

I created a Chrome user-data directory on 23 April 2026 to run an automated audit (one of the WebSentinel 100-site privacy sweeps). The audit driver is fully Chrome DevTools Protocol - it loads a page, dwells for five minutes with no input, captures events, closes Chrome between sites - and the profile had received zero keyboard or mouse input from a human at any point in its existence. Every "AI mode" surface in Chrome was untouched - in fact every UI surface in Chrome was untouched, the audit driver only interacts with the document via CDP and the omnibox is never reached. By 29 April the profile contained 4 GB of OptGuideOnDeviceModel weights - and I knew it because a routine du -sh of the audit-profile directory caught it during a cleanup pass.
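The du -sh check can be reproduced on any platform. What follows is a minimal Python sketch, assuming only that the model sits in a directory named OptGuideOnDeviceModel somewhere beneath whichever Chrome user-data directory you point it at. The helper names (dir_size_bytes, find_model_dirs, report) are illustrative and are not anything Chrome defines.

```python
import os
from pathlib import Path

# Directory name reported in this article; everything else here is illustrative.
MODEL_DIR_NAME = "OptGuideOnDeviceModel"


def dir_size_bytes(root: Path) -> int:
    """Sum the sizes of all regular files below root (symlinks are skipped)."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            if path.is_file() and not path.is_symlink():
                total += path.stat().st_size
    return total


def find_model_dirs(user_data_dir: Path) -> dict:
    """Map each OptGuideOnDeviceModel directory under user_data_dir to its size in bytes."""
    found = {}
    for dirpath, dirnames, _filenames in os.walk(user_data_dir):
        if MODEL_DIR_NAME in dirnames:
            model_dir = Path(dirpath) / MODEL_DIR_NAME
            found[model_dir] = dir_size_bytes(model_dir)
            # Prune so os.walk does not descend into the model directory itself.
            dirnames.remove(MODEL_DIR_NAME)
    return found


def report(user_data_dir: Path) -> None:
    """Print one line per model directory found, with its size in decimal GB."""
    for model_dir, size in find_model_dirs(user_data_dir).items():
        print(f"{model_dir}: {size / 1e9:.2f} GB")
```

Calling report(Path(...)) with the path of a user-data directory prints one line per model directory it finds. Running it before and after a Chrome session is one way to catch a re-download.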

I went back to .fseventsd to ask exactly when those 4 GB landed. macOS gave me the answer, byte-precise, in three sequential page files:

- 24 April 2026, 16:38:54 CEST (14:38:54 UTC) - Chrome creates the OptGuideOnDeviceModel directory in the audit profile (page file 0000000003f7f339).

- 24 April 2026, 16:47:22 CEST (14:47:22 UTC) - three concurrent unpacker subprocesses spawn temporary directories in /private/var/folders/.../com.google.Chrome.chrome_chrome_Unpacker_BeginUnzipping.*/. One of them (5xzqPo) writes weights.bin, manifest.json, _metadata/verified_contents.json and on_device_model_execution_config.pb. The second writes a Certificate Revocation List update. The third writes a browser preload-data update. Chrome batched a security update, a preload refresh and a 4 GB AI model into the same idle window, as if they were equivalent (page file 00000000040c8855).

- 24 April 2026, 16:53:22 CEST (14:53:22 UTC) - the unpacked weights.bin is moved to its final location at OptGuideOnDeviceModel/2025.8.8.1141/weights.bin along with adapter_cache.bin, encoder_cache.bin, _metadata/verified_contents.json and the execution config. Concurrently four additional model targets (numbered 40, 49, 51 and 59 in Chrome's optimization-guide enum) register fresh entries in optimization_guide_model_store - these are the smaller text-safety and prompt-routing models that pair with the LLM. None of these targets existed in the profile before this moment (page file 00000000040d0f9c).

Total install time, from directory creation to final move: 14 minutes and 28 seconds. Total human action against the profile during that window: none. The audit driver was either dwelling on a third-party home page or transitioning between sites - the unpacker fired in the background while a tab waited for a five-minute timer to expire.
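The arithmetic behind that figure is easy to check. The sketch below uses only the three timestamps quoted above and treats CEST as a fixed UTC+2 offset; the variable names are mine.

```python
from datetime import datetime, timedelta, timezone

# CEST modelled as a fixed UTC+2 offset, which is all these timestamps need.
CEST = timezone(timedelta(hours=2), "CEST")

created = datetime(2026, 4, 24, 16, 38, 54, tzinfo=CEST)   # directory creation
unpacked = datetime(2026, 4, 24, 16, 47, 22, tzinfo=CEST)  # unpackers write weights.bin
moved = datetime(2026, 4, 24, 16, 53, 22, tzinfo=CEST)     # final move into the profile

elapsed = moved - created
print(elapsed)                            # 0:14:28
print(unpacked - created)                 # 0:08:28
print(created.astimezone(timezone.utc))   # 2026-04-24 14:38:54+00:00
```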

The naming inside that fseventsd record is, if anything, the most damning detail. The temp directory is com.google.Chrome.chrome_chrome_Unpacker_BeginUnzipping.5xzqPo - that prefix com.google.Chrome.chrome_chrome_* is the bundle ID and subprocess naming convention Google Chrome itself uses. It is not com.google.GoogleUpdater.* and it is not com.google.GoogleSoftwareUpdate.*. The writer is Chrome - the browser process the user has installed and trusts to load web pages - reaching into the user's filesystem on its own initiative and laying down a 4 GB ML binary while the foreground tab does something completely unrelated.
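That attribution rule can be written down as a small check. This is a sketch of the inference made above, not a forensic tool: it assumes the three prefixes named in this article are the only ones of interest, and the labels and the classify_writer function are illustrative.

```python
# Prefixes named in this article; the human-readable labels are illustrative.
WRITER_PREFIXES = {
    "com.google.Chrome.": "Chrome browser",
    "com.google.GoogleUpdater.": "GoogleUpdater",
    "com.google.GoogleSoftwareUpdate.": "Google Software Update",
}


def classify_writer(path: str) -> str:
    """Label a path by the first component that starts with a known prefix."""
    for component in path.split("/"):
        for prefix, label in WRITER_PREFIXES.items():
            if component.startswith(prefix):
                return label
    return "unknown"


# The elided "..." is kept exactly as it appears in the fseventsd record above.
temp_path = (
    "/private/var/folders/.../"
    "com.google.Chrome.chrome_chrome_Unpacker_BeginUnzipping.5xzqPo/weights.bin"
)
print(classify_writer(temp_path))  # Chrome browser
```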

Three further pieces of corroborating evidence sit elsewhere on the same machine:

- Chrome's own Local State JSON for the audit profile contains an optimization_guide.on_device block with model_validation_result: { attempt_count: 1, result: 2, component_version: "2025.8.8.1141" }. Chrome ran the model. The component_version matches the version string the fseventsd events recorded as the path component. Two independent witnesses, same artefact. The same block reports performance_class: 6, vram_mb: "36864" - Chrome characterised my hardware (read the GPU, read the unified memory total) to decide whether I was eligible for the model push, before any user-facing AI feature surfaced.

- Chrome's ChromeFeatureState for the audit profile lists OnDeviceModelBackgroundDownload<OnDeviceModelBackgroundDownload and ShowOnDeviceAiSettings<OnDeviceModelBackgroundDownload in the enable-features block. The first flag is what triggers the silent download. The second flag is what reveals the on-device AI section in chrome://settings. Both are gated by the same rollout flag - which means that by Chrome's own architecture, the install begins before the user has any settings UI in which to refuse it. The settings page that would let you discover the feature exists is enabled in lockstep with the install - it is design, not oversight.

- The GoogleUpdater logs record the on-device-model control component (appid {44fc7fe2-65ce-487c-93f4-edee46eeaaab}) being downloaded from http://edgedl.me.gvt1.com/edgedl/diffgen-puffin/%7B44fc7fe2-65ce-487c-93f4-edee46eeaaab%7D/... - a 7 MB compressed control file that arrived on 20 April 2026, three days before the audit profile in question was created. That is the upstream control plane: it is profile-independent, it is launched automatically by a LaunchAgent that fires every hour, and the URL is plain HTTP (the integrity is verified by the CRX-3 signature inside the package, not by transport security). The control component gives Chrome the manifest pointing at the actual weights, and Chrome's in-process OnDeviceModelComponentInstaller - a separate code path from GoogleUpdater - then fetches the multi-GB weights direct from Google's CDN.
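The first two cross-checks can be scripted. The sketch below is hedged accordingly: the JSON nesting is my assumption, reconstructed from the dotted name optimization_guide.on_device, and the sample values are the ones quoted above. The function names are illustrative, and the enable-features string is assumed to be a comma-separated list of Feature<Trial entries.

```python
import json


def on_device_state(local_state: dict) -> dict:
    """Return the optimization_guide.on_device block, or {} if it is absent."""
    return local_state.get("optimization_guide", {}).get("on_device", {})


def version_matches(local_state: dict, installed_path: str) -> bool:
    """True if component_version appears as a path component of installed_path."""
    result = on_device_state(local_state).get("model_validation_result", {})
    version = result.get("component_version")
    return version is not None and version in installed_path.split("/")


def parse_enable_features(value: str) -> dict:
    """Split 'Feature<Trial,Other' into {feature: trial, or None if ungated}."""
    features = {}
    for entry in filter(None, value.split(",")):
        name, _, trial = entry.partition("<")
        features[name] = trial or None
    return features


# Sample data built from the values quoted in this article (nesting assumed).
sample = json.loads("""
{"optimization_guide": {"on_device": {
    "model_validation_result": {
        "attempt_count": 1, "result": 2, "component_version": "2025.8.8.1141"},
    "performance_class": 6,
    "vram_mb": "36864"}}}
""")
flags = parse_enable_features(
    "OnDeviceModelBackgroundDownload<OnDeviceModelBackgroundDownload,"
    "ShowOnDeviceAiSettings<OnDeviceModelBackgroundDownload"
)

# Does Local State agree with the path recorded by fseventsd?
print(version_matches(sample, "OptGuideOnDeviceModel/2025.8.8.1141/weights.bin"))  # True
# Are the download and the settings UI gated by the same rollout?
print(flags["OnDeviceModelBackgroundDownload"] == flags["ShowOnDeviceAiSettings"])  # True
```

Run against a real Local State file, the same functions would take json.load(open(path)) in place of the sample; where that file lives depends on platform and profile layout.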

So we now have a four-way evidence chain - macOS kernel filesystem events, Chrome's own per-profile state, Chrome's runtime feature flags, and Google's component-updater logs - all four agreeing on the same conduct, and the conduct is: a 4 GB AI model arrived on this user's disk without consent, without notice, on a profile that received zero human input, in a window of 14 minutes and 28 seconds, on a Friday afternoon.

Reports of the OptGuideOnDeviceModel directory and the weights.bin file have been circulating in community forums for over a year - what is new in 2026 is the scale and the verifiability. Chrome's market share has held above 64% globally [9][10], Chrome's user base is between 3.45 billion and 3.83 billion individuals worldwide depending on which 2026 estimate you trust [9][11], and Google has been rolling Gemini features into Chrome with increasing aggression. The behaviour is no longer affecting a minority of power users on a minority of platforms - it is affecting hundreds of millions of devices, on every desktop OS Chrome ships against.

The Anthropic comparison, point for point

The same dark-pattern playbook. I am repeating my categorisation from the Claude Desktop article [1] because the patterns are identical and that is the point.

1. Forced bundling across trust boundaries. Anthropic installed Claude Desktop, then wrote into Brave, Edge, Arc, Vivaldi, Opera, and Chromium. Google installs Chrome, then writes a 4 GB AI model under the user's profile directory without authorisation. The binary is not Chrome. It is a separately-trained machine-learning model, with a separate purpose, a separate data-protection profile, and a separate consent footprint.

2. Invisible default, no opt-in. No dialogue at first launch. No checkbox in Settings. The model is downloaded; the user finds out about it months later when their disk fills up [5][6][7].

3. More difficult to remove than to install. Adding the file took zero clicks. Removing it requires (a) discovering the file exists, (b) understanding what it is, (c) navigating into a hidden user profile path, (d) deleting it (and on Windows, also clearing the read-only attribute first), and (e) accepting that Chrome will silently re-download it in the next eligible window unless the user also navigates chrome://flags, enterprise policy, or platform-specific configuration tooling to disable the underlying Chrome AI feature [5]. None of those steps is documented in the place a normal user looks - none of them is even hinted at in default Chrome.

4. Pre-staging of capability the user has not requested. The Nano model exists on the user's disk so that Chrome features that use it can run instantly when the user invokes them. The user has not invoked any of those features. The model still sits there, taking 4 GB.

5. Scope inflation through generic naming. OptGuideOnDeviceModel is internal Chrome jargon for "OptimizationGuide on-device model storage". A user looking at their disk usage, even one who knows roughly what they are looking at, would not match OptGuideOnDeviceModel/weights.bin to "Gemini Nano LLM weights". Accurate naming would be GeminiNanoLLM/weights.bin. Google chose to obfuscate the name.

6. Registration into resources the user has not configured. A user who has not opened Chrome's AI features still gets the model. A user who has opened them once and decided they were not interested still gets the model. The file's presence is decoupled from the user's actual use of any feature it powers.

7. Documentation gap. Google's user-facing documentation about Chrome's AI features does not, with the prominence proportionate to a 4 GB silent download, tell the user that the cost of the feature being available is a 4 GB file appearing on their device. The behaviour is documented in places a curious admin will find. It is not documented in the place a regular user looks before installing Chrome or before Chrome decides to begin pushing the model.

8. Automatic re-install on every run. Same as Claude Desktop. Delete the file, Chrome re-creates it. The user's deletion is treated as a transient state to be corrected, not as a directive to be respected.

9. Retroactive survival of any future user consent. If Google starts asking users in future "would you like Chrome to download a 4 GB AI model", that prompt does not retroactively legitimise the silent installs that have already happened on hundreds of millions of devices. The damage to the trust relationship is done. The bytes have moved. The atmosphere has been written to.

10. Code-signed, shipped through the normal release channel. This is not test build behaviour. It is Chrome stable.

The "AI Mode" pill is the cherry on top

Here is the part that should make every privacy lawyer in the audience put their coffee down. When Chrome 147 launches against an eligible profile, the omnibox - the address bar at the top of the window, the most visible piece of real estate in the entire browser - renders an "AI Mode" pill to the right of the URL field. A reasonable user, seeing "AI Mode" sitting in their browser's most prominent UI element in 2026, with the well-publicised existence of on-device LLMs in Chrome and a 4 GB Gemini Nano binary already silently installed on their disk, is going to draw what feels like an obvious inference - that the visible AI Mode is using the on-device model, that their queries stay on the device, that the local model is what powers the local-looking surface.

Every part of that inference is wrong. The AI Mode pill in the Chrome 147 omnibox is a cloud-backed Search Generative Experience surface - every query the user types into it is sent over the network to Google's servers for processing by Google's hosted models. The on-device Nano model is not invoked by the AI Mode UI flow at all. They are entirely separate code paths - the most visible AI affordance in the browser does not use the local model the user has been silently given, and the features that do use the local model (Help-Me-Write in <textarea>, tab-group AI suggestions, smart paste, page summary) are buried in textarea-context menus and tab-group right-click menus that the average user will discover, on average, never.

Think about what that arrangement actually is. The user pays the storage cost of the silent install (4 GB on disk, plus the bandwidth of the silent download). The user's most visible AI experience - the pill they actually see and click - delivers no on-device benefit at all because it routes to Google's servers regardless. The on-device model is therefore a sunk cost imposed on the user, with no offsetting transparency benefit at the surface where transparency would matter most. To put it another way - if the on-device install had given the user a clear "your AI Mode queries stay on your device" property, the install would have a defensible privacy framing (worse storage, better data flow). It does not - the install gives Google a future-options resource (the model can be invoked by other Chrome subsystems without further server round-trips) at the user's disk-and-bandwidth expense, while the headline AI surface continues to send the user's queries to Google as before. The local model is a Google-side asset positioned on the user's device, not a user-side asset, and one could argue it is nothing but sleight of hand that obscures the fact that the visible AI Mode is not using the local model at all.

That arrangement, on its own, engages at least three of the deceptive design pattern families catalogued in EDPB Guidelines 03/2022 [20]. It is misleading information because the visible label "AI Mode" creates a false impression about where processing occurs - the label does not say "cloud-backed" or "queries sent to Google", and a reasonable user with knowledge of on-device AI will infer locality from the proximity of an on-device 4 GB model on their disk. It is skipping because the user is not given a moment to choose between local-only and cloud-backed AI surfaces - both are switched on by the same upstream rollout, with no per-feature consent. And it is hindering because turning AI Mode off does not also remove the on-device install, and removing the on-device install does not turn AI Mode off - the two are separately controlled, and discovering both controls requires knowing about both chrome://flags and chrome://settings/ai, neither of which is obvious in default Chrome.

So: not just a non-consented install, but a non-consented install that doubles as cover for a parallel cloud-backed surface that misrepresents to the user where their typing is being processed. Both layers compound the consent problem.

Why this is unlawful in the EEA and the UK

Article 5(3) of Directive 2002/58/EC (the ePrivacy Directive) prohibits the storing of information, or the gaining of access to information already stored, in the terminal equipment of a subscriber or user, without the user's prior, freely-given, specific, informed, and unambiguous consent, except where strictly necessary for the provision of an information-society service explicitly requested by the user [2]. The 4 GB Gemini Nano weights file is information stored in the user's terminal equipment. The user did not consent. The user has not requested any service that strictly requires a 4 GB on-device LLM. Chrome is functional without the file. The Article 5(3) breach is direct.

Article 5(1) GDPR requires processing of personal data to be lawful, fair, and transparent to the data subject [3]. Where the user's hardware is profiled to determine eligibility for the model push, where the install events are logged on Google's servers, and where the on-device features the model powers process user prompts (whether or not those prompts leave the device), the lawfulness, fairness, and transparency of all of that processing depend on the user being told, in plain language, what is happening. They are not.

Article 25 GDPR requires the controller to implement appropriate technical and organisational measures to ensure that, by default, only personal data that are necessary for each specific purpose are processed [3]. Pre-staging a 4 GB AI model on a user's disk, against a contingency that the user might in future invoke an AI feature, is the architectural opposite of by-default minimisation. The profiling of the device to determine whether or not to push the model is no different from the profiling used to track users online, so that profile contains personal data, and if the AI model is used, it will process personal data. The GDPR arguments are therefore in scope and valid.

Under the UK GDPR and the Privacy and Electronic Communications Regulations 2003, the analysis is the same. Under the California Consumer Privacy Act, the absence of a notice-at-collection covering this specific category of pre-staged software puts Google's CCPA notice posture in question [12].

Then there are the criminal-law violations under various national computer-misuse statutes - which again cannot be overstated.